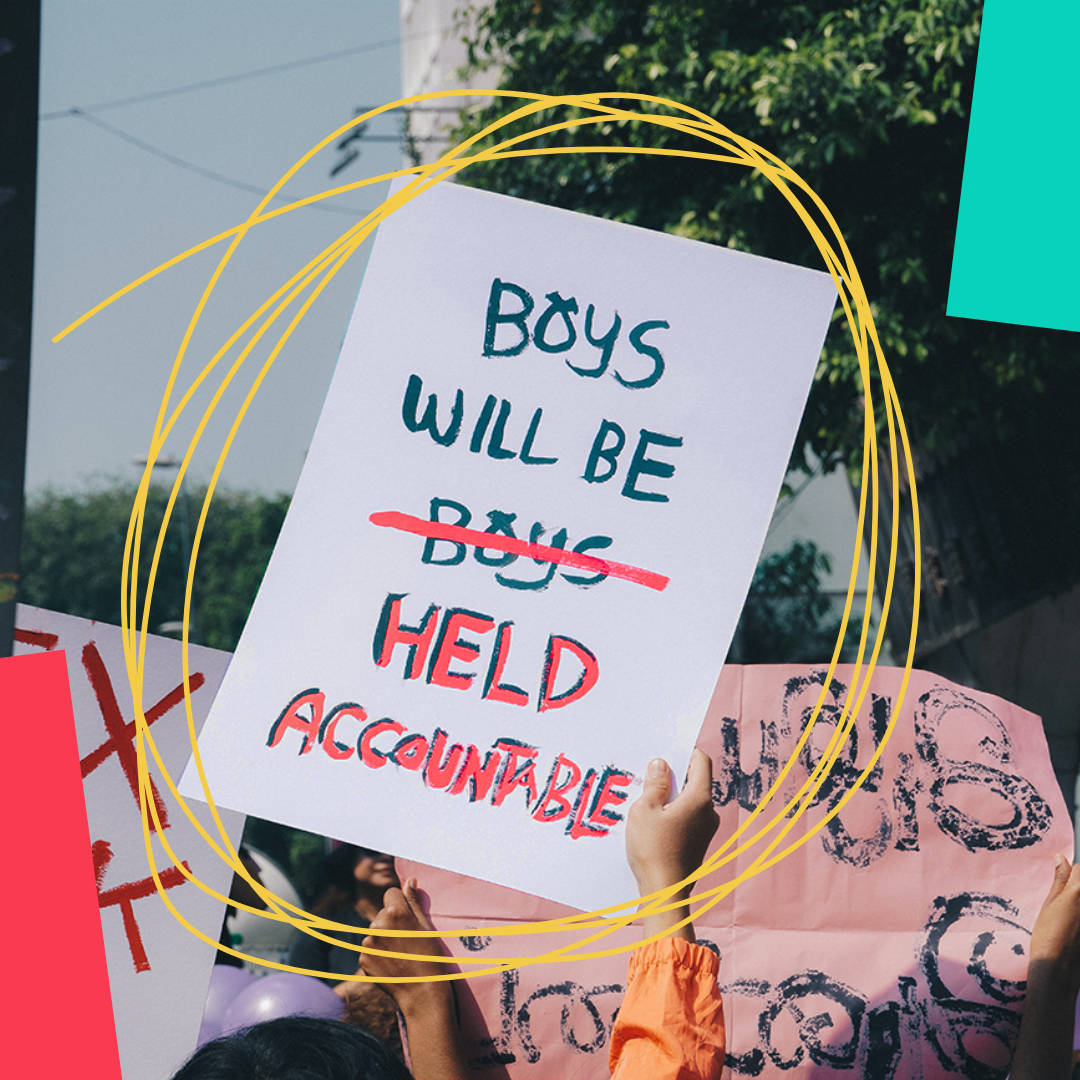

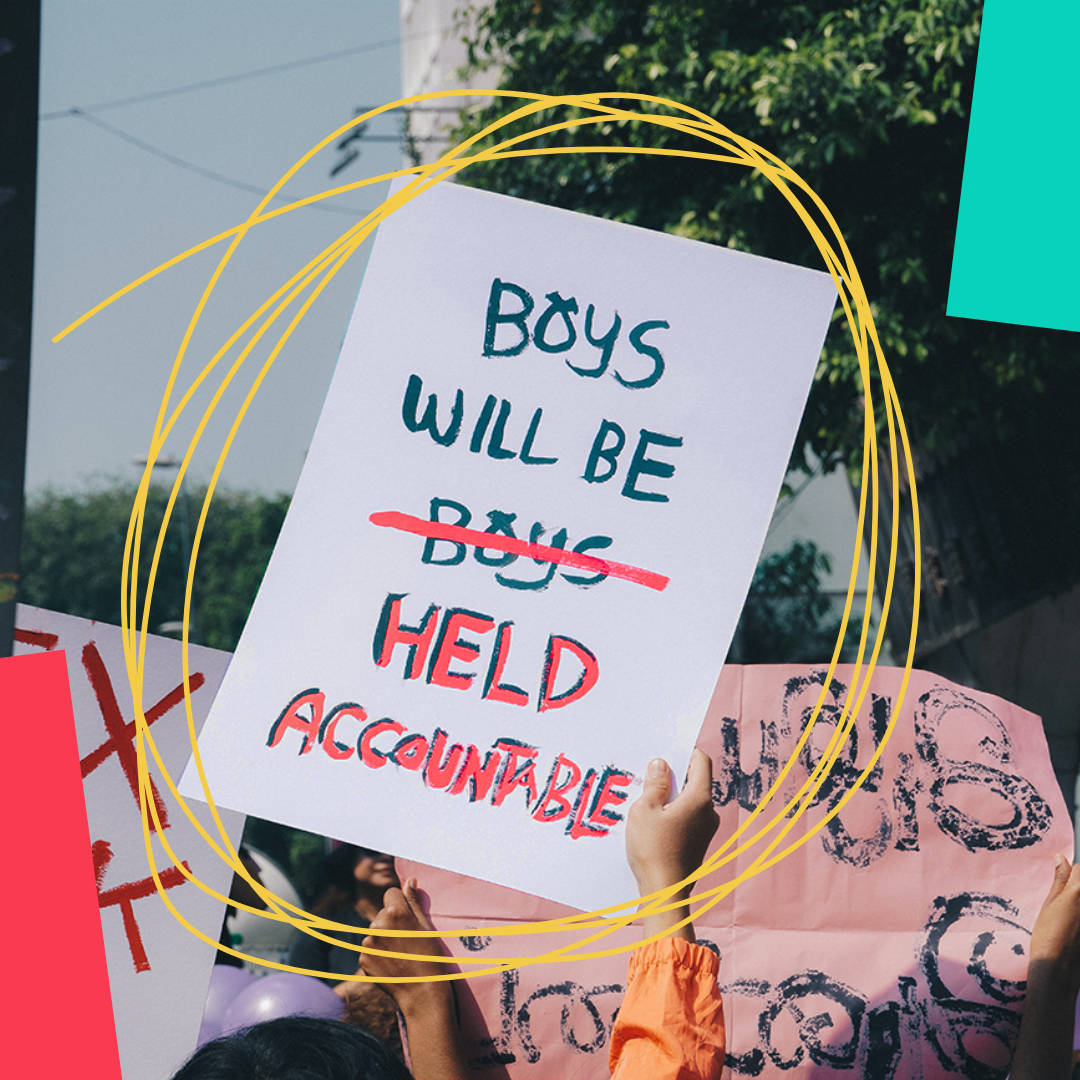

We’re campaigning for reform of the laws on online abuse and for robust regulation of the tech platforms perpetrators use, so that those responsible are held accountable.

Updates

- March 25, 2026: 73% say government must do more on violence against women and girls, as new report warns of “rapidly evolving” threats driven by AI, online misogyny a...

- February 18, 2026: Today (18th February 2026) the government has announced measures requiring tech platforms to take down intimate images shared without a victim’s...

- February 06, 2026: Today (6th February 2026), following months of campaigning by survivor Jodie*, End Violence Against Women Coalition, #NotYourPorn, Professor Clare McG...


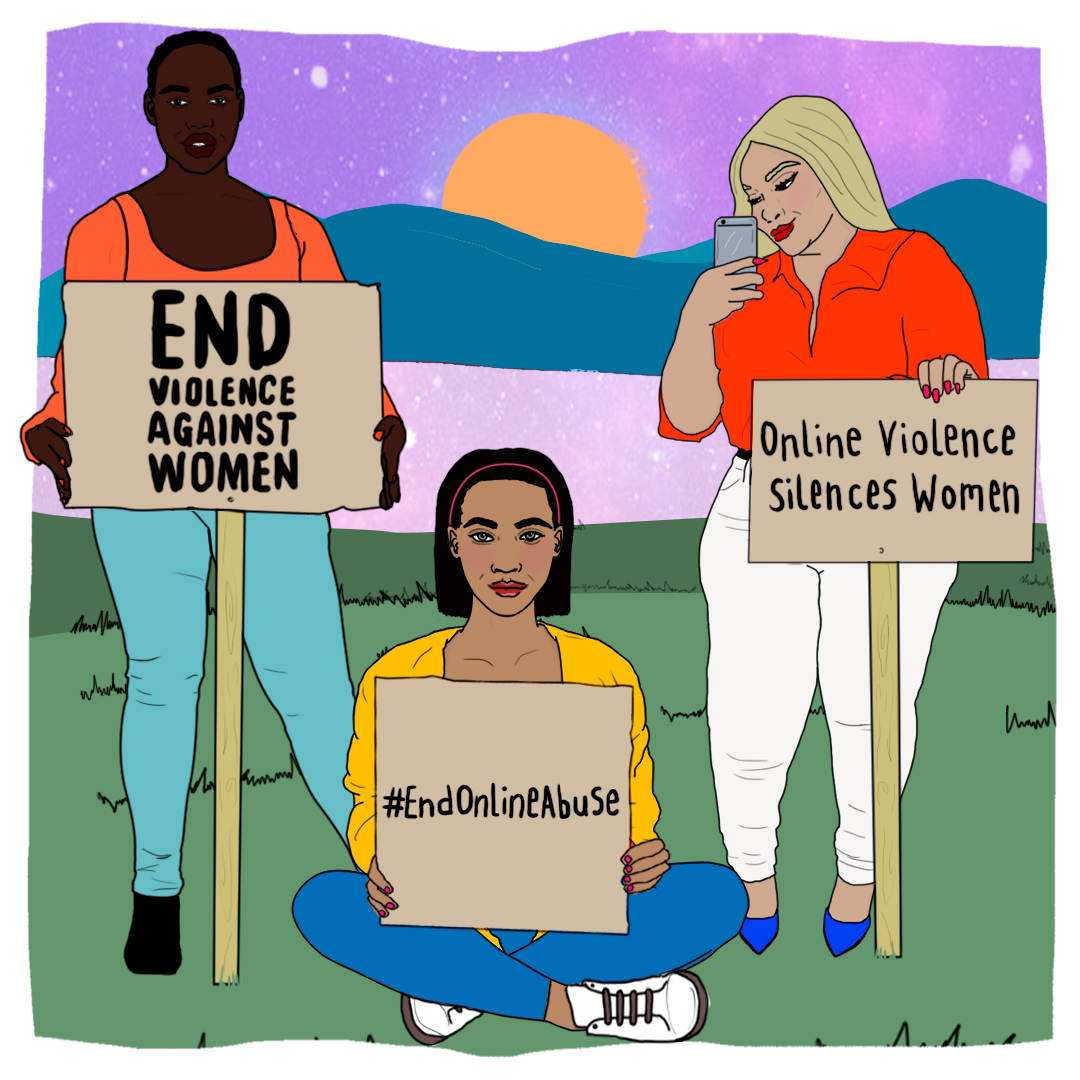

- Women are 27 times more likely than men to be harassed online.

- 1 in 5 women have experienced online harassment or abuse.

- Black women are 84% more likely to receive abusive or problematic tweets than white women.

Online abuse includes cyberstalking, sexual harassment, grooming for exploitation or abuse, image-based sexual abuse (so-called ‘revenge porn’, upskirting, sexually explicit deepfakes, sexual extortion and videos of sexual assaults and rapes), rape threats, doxxing of women’s personal information, tech abuse in intimate partnerships, and much more. This abuse can have a long-lasting, devastating impact on survivors.

We should all be able to socialise, work, learn, get involved in activism or join a community online, free from the threat of abuse. But women’s and girls’ rights and freedoms are being restricted online, as we self-censor, avoid certain platforms or come offline to avoid harm. And this issue is only worsening as our online world becomes ever more interwoven with our daily lives.

We won our campaign to include women and girls in the new online safety law – now we’re calling for the law to go further and #StopImageBasedAbuse by:

- Strengthening criminal laws

- Creating ways for survivors to use civil law to get images taken down

- Regulating tech companies and holding them accountable for preventing the image-based abuse they profit from

- Properly funding specialist support services that are a lifeline for survivors

- Educating young people through quality relationships and sex education
